As artificial intelligence (AI) adoption continues to grow rapidly, governments around the world are wrestling with how to enable the safe and secure use of AI software, products, and services. AI has the potential to affect nearly every industry and aspect of society, and without the counterbalance of government intervention, it could be misused or abused and create serious risks to safety, privacy, and security.

Every government is approaching AI security differently. In the U.S., there has been the AI Executive Order (EO), along with guidance from bodies such as the National Institute of Standards and Technology (NIST) and the Cybersecurity and Infrastructure Security Agency (CISA). So far, the U.S. approach has focused on enabling innovation and fostering AI adoption while emphasizing security, relying largely on voluntary measures.

The EU is taking a different approach, recently passing first-of-its-kind legislation, the EU AI Act, that bans some potential uses of AI outright and imposes financial penalties for violations. In this analysis, I’ll look at the act and its implications not only for the EU itself but also for the U.S. and other countries whose products and services may reach the EU market.

Overview

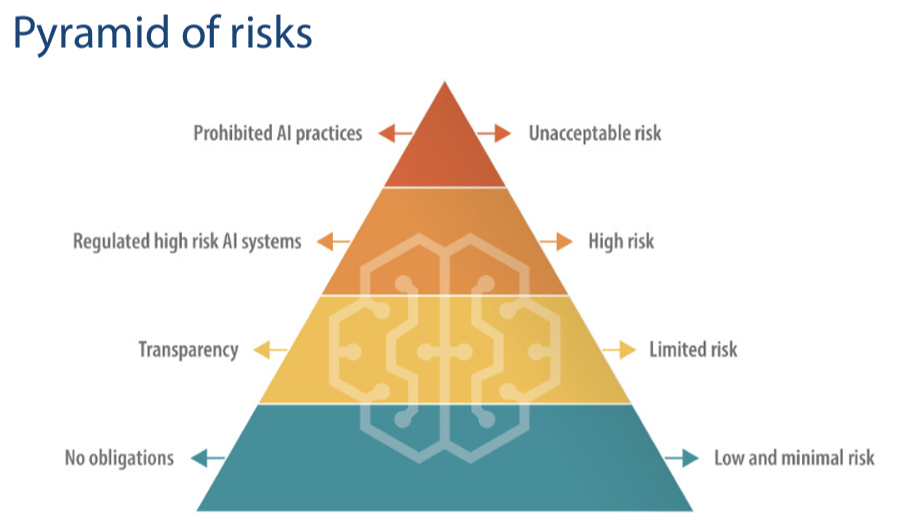

The EU AI Act may catch some by surprise, but it has been working its way through the legislative process for some time and builds on earlier position papers and publications. It applies to all AI systems developed, used, or sold in the EU. It takes a risk-based approach, assigning systems to different risk levels depending on their use cases, and it prohibits some uses of AI outright.

The EU AI Act imposes specific requirements for transparency, record keeping, testing, and reporting, as well as financial penalties for violations. Because it applies to all AI products developed, used, or sold in the EU, it has global reach despite being an EU law. Much like the General Data Protection Regulation (GDPR) before it, the act is something every firm that uses AI and has any involvement with the EU market needs to be aware of.

Risk-Based Approach

The EU AI Act sorts AI systems into tiers of increasing risk, each defined by the use cases it covers and each carrying its own obligations.

Unacceptable

As mentioned above, the EU AI Act bans some AI practices and uses outright: those deemed a clear threat to people’s safety, livelihoods, and rights. Examples include manipulative techniques, the exploitation of vulnerable groups, and “social scoring” by public authorities.

High

High-risk systems are those that can adversely affect an individual’s safety or fundamental rights. Examples include products covered by the EU’s health and safety legislation, as well as systems used in critical infrastructure management, law enforcement, biometrics, and education, among several other areas. Providers of goods and services in these categories must register in an EU-wide database and conduct self-assessments, and some must also undergo a third-party risk assessment.

Limited

Limited-risk systems are those that interact with humans, such as chatbots and emotion recognition systems, as well as systems that generate or manipulate images and text. These systems must meet the transparency requirements the EU AI Act lays out.

Low and Minimal

Finally, for low- and minimal-risk AI systems, the act encourages providers to align with a forthcoming code of conduct. Adherence is voluntary, and the code is intended to protect the safety and security of individuals who use these systems or whose data they involve.
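For teams building an internal inventory of AI products, the tiers above can be encoded as an ordered type so that a product with several use cases is assigned the most restrictive tier any of them triggers. The sketch below is a hypothetical illustration: the use-case labels, the `RiskTier` enum, and the `classify` helper are assumptions drawn from this article's examples, not terms or a procedure defined by the act, and real classification requires legal review against the act's text.

```python
from enum import IntEnum


class RiskTier(IntEnum):
    """Risk tiers described in this article, ordered from least to most restrictive."""
    MINIMAL = 0       # low/minimal risk: voluntary code of conduct
    LIMITED = 1       # transparency requirements
    HIGH = 2          # registration, self-assessment, possible third-party assessment
    UNACCEPTABLE = 3  # prohibited outright


# Hypothetical use-case labels mapped to tiers, based only on the examples above.
EXAMPLE_USE_CASES: dict[str, RiskTier] = {
    "social_scoring": RiskTier.UNACCEPTABLE,
    "manipulative_techniques": RiskTier.UNACCEPTABLE,
    "critical_infrastructure": RiskTier.HIGH,
    "law_enforcement": RiskTier.HIGH,
    "biometrics": RiskTier.HIGH,
    "education": RiskTier.HIGH,
    "chatbot": RiskTier.LIMITED,
    "emotion_recognition": RiskTier.LIMITED,
    "content_generation": RiskTier.LIMITED,
}


def classify(use_cases: list[str]) -> RiskTier:
    """Return the most restrictive tier triggered by any of a product's use cases.

    Unknown labels fall back to MINIMAL purely for illustration; in practice they
    should be flagged for review rather than assumed to be low risk.
    """
    return max(
        (EXAMPLE_USE_CASES.get(u, RiskTier.MINIMAL) for u in use_cases),
        default=RiskTier.MINIMAL,
    )


if __name__ == "__main__":
    # A chatbot used in education inherits the stricter HIGH tier.
    print(classify(["chatbot", "education"]).name)  # HIGH
```

Taking the maximum reflects the idea that obligations stack: a product is only as lightly regulated as its most sensitive use case allows.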

Financial Consequences

The EU AI Act requires each member state to designate a competent authority to oversee the act’s implementation and enforcement. These authorities will help AI system providers take corrective action for violations and, in some cases, ensure that violating products are removed from the market entirely. There are also financial consequences: the most serious violations can draw fines of up to 35 million euros or 7% of total worldwide annual turnover, whichever is higher. For large firms, the turnover-based figure can far exceed the fixed amount.
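The arithmetic behind that ceiling is simple, but it shows why the percentage matters for large companies. The sketch below assumes the "whichever is higher" rule for the top tier of fines and uses a hypothetical `max_fine_eur` helper; it does not model lower fine tiers, any different treatment for smaller companies, or how authorities actually set a fine within the cap.

```python
FIXED_CAP_EUR = 35_000_000  # fixed ceiling for the most serious violations
TURNOVER_PERCENT = 7        # percentage of total worldwide annual turnover


def max_fine_eur(worldwide_annual_turnover_eur: int) -> int:
    """Upper bound of a top-tier fine: the greater of the fixed cap or 7% of turnover.

    Integer arithmetic avoids floating-point rounding on large turnover figures.
    """
    turnover_based = worldwide_annual_turnover_eur * TURNOVER_PERCENT // 100
    return max(FIXED_CAP_EUR, turnover_based)


if __name__ == "__main__":
    # 7% of 100M is 7M, so the fixed 35M cap applies.
    print(max_fine_eur(100_000_000))    # 35000000
    # 7% of 2B is 140M, which exceeds the fixed cap.
    print(max_fine_eur(2_000_000_000))  # 140000000
```

The break-even point is 500 million euros of turnover; above it, the percentage-based ceiling dominates.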

Challenges for CISOs and Cybersecurity Leaders

This act presents a series of challenges for CISOs and cybersecurity leaders at organizations whose products touch the EU market. CISOs will need to understand how their organization uses AI in its products and services, including what data is involved, how that data is used, and what the products’ use cases are, and they must ensure that interactions with users meet the act’s transparency requirements. CISOs must also be familiar with the testing requirements and the obligation to register in the EU-wide database, and they must be able to determine which risk tier their organization’s products fall under.

Failing to address these emerging requirements as organizations adopt AI exposes them to legal, regulatory, financial, and reputational harm, all of which can hurt the business, its revenue, and its stakeholders. Security leaders must become familiar with the EU AI Act and make sure their C-suite peers and product teams are tracking its requirements, so the business can use AI securely while mitigating these risks.